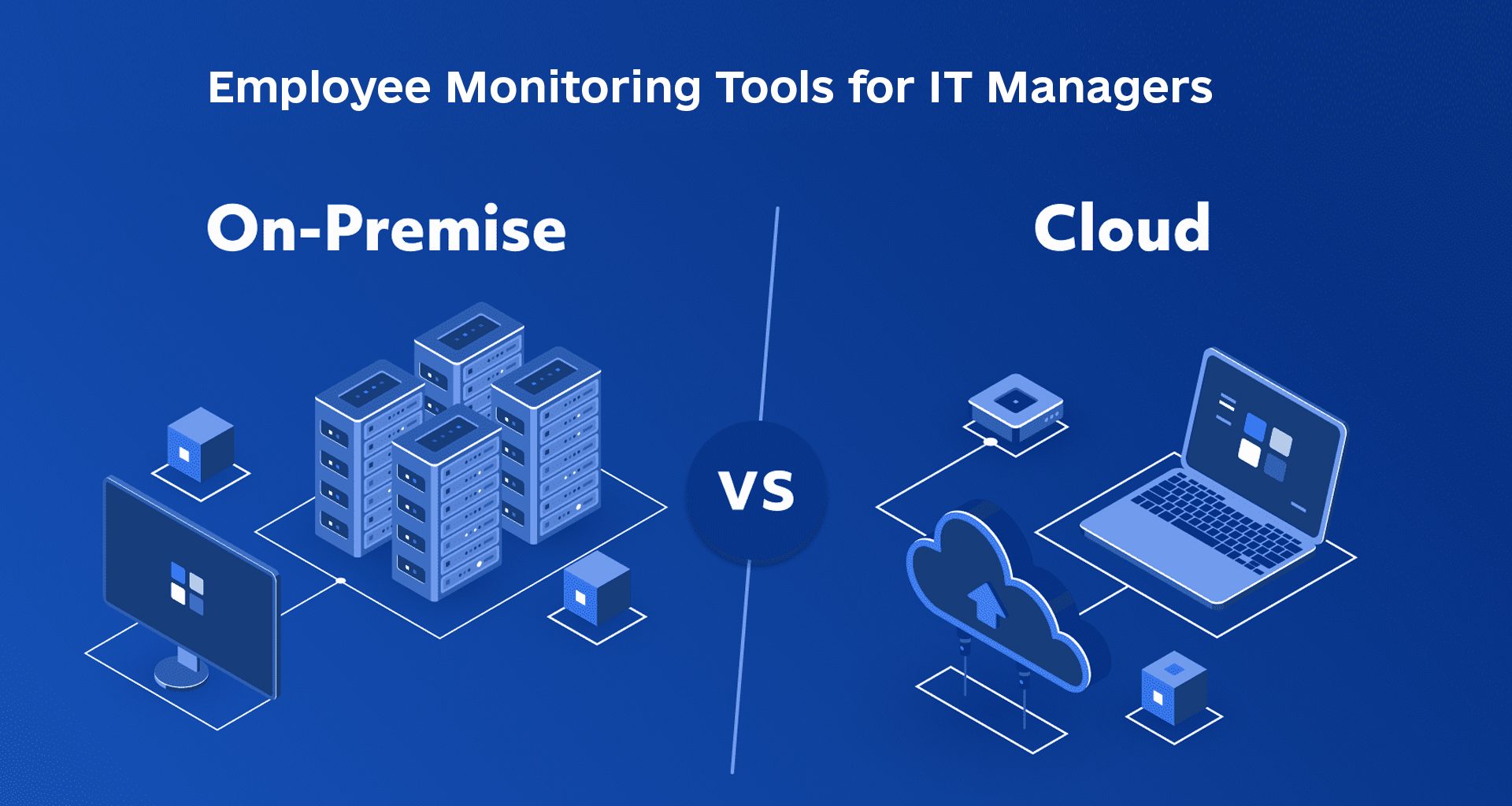

Employee monitoring is one of those tools that feels “obvious” in a demo – right up until it’s deployed across real laptops, real networks, and real teams. At that point it stops being a nice dashboard and turns into infrastructure: agents on endpoints, data pipelines, storage, permissions, and a long list of “who can see what” questions. The biggest fork in the road shows up early – not in features, but in where the system runs: on-premise or in the cloud.

On paper, on-prem and cloud tools can look almost identical. In real use, they behave differently in the places IT actually cares about: reliability, performance overhead, audit readiness, and how quickly teams can respond when something looks off. The “better” model is rarely about trends – it’s about how much control you need, how much operational work you’re willing to own, and what kind of risk your organization can tolerate.

Below is a practical comparison built like a tech review: what each model gives you, what it costs you (in effort and in risk), and what usually breaks first. You’ll also see where a self-hosted, enterprise-focused option can make sense when cloud isn’t a fit – without turning this into a sales pitch.

What IT Managers Actually Want From Employee Monitoring Tools

IT teams want employee monitoring to deliver a small number of signals that are genuinely actionable, not a flood of “activity noise”. The real value is spotting patterns early – performance dips, risky workflows, policy drift, and unusual behavior on endpoints – without turning day-to-day support into a monitoring helpdesk.

Good tools summarize “what changed” and “what matters”, instead of forcing admins to scroll through raw timelines. A useful view shows recurring bottlenecks (apps, sites, devices), highlights anomalies, and makes it obvious where to dig deeper – ideally with filters that match how IT triages issues in real life.

Reliability matters more than “features” once the agent is installed everywhere. If updates break endpoints, offline mode corrupts timelines, or reporting depends on perfect connectivity, IT will pay the cost in tickets and rollbacks. The best systems behave like boring infrastructure – predictable, quiet, and easy to roll out in phases.

Boundaries are where many deployments fail politically and operationally. If the tool collects more than your policy can justify, or if access is too broad, it becomes a trust issue inside the company – and a risk issue for IT. The safer path is clear scope: what is collected, why it’s collected, who can see it, and how long it’s retained.

The best employee monitoring deployments are the ones you don’t “feel” day to day. If IT only touches the system for audits, investigations, or scheduled reviews, it’s usually a sign the rollout and permissions were done right.

Finally, monitoring has to fit your stack. SSO, RBAC, exports, and integration with existing security/reporting workflows often matter more than any single dashboard. If the product forces a brand-new process for things your team already does well, it won’t last past the pilot.

Data Ownership: Who Owns the Monitoring Data

Data ownership is the first “real-world” test of any monitoring setup. Once logs and metrics pile up, IT will inevitably be asked where the data lives, who can access it, how it’s exported, and how long it’s kept. The answers look very different depending on whether storage sits in your environment or outside it.

With cloud platforms, the workflow is usually smooth, but governance is partially delegated. Retention limits, export formats, and access controls may be configurable, yet the underlying storage and admin plane are vendor-managed. That’s fine for many organizations – until a request arrives that needs “full raw” access, fast, under strict internal rules.

On-premise keeps governance closer to home. Storage, retention, and access logs can be aligned with the same policies you already use for internal logs and security evidence. The trade-off is that you own the operational side too – capacity planning, patching, backups, and the “don’t let this server become an orphan” problem.

The model difference shows up in “edge cases” that aren’t rare in enterprise IT:

- Internal investigation – can you lock, export, and prove access quickly?

- Audit review – can you explain retention and permissions without ambiguity?

- Legal request – can you produce the exact slice of history without friction?

Retention is where “cost” and “risk” collide, and both models push you differently:

| Retention question | Cloud tends to push you toward | On-prem tends to push you toward |
| --- | --- | --- |
| “How long can we keep data?” | plan limits / storage tiers | internal storage policy |
| “Who approves retention?” | policy + vendor constraints | internal security/legal |
| “How fast can we purge?” | platform workflow | your workflow (and scripts) |
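
To make the “your workflow (and scripts)” cell concrete, here is a minimal Python sketch of a retention purge selector. It is an illustration under assumptions, not how any particular product works: the `Record` type, the `legal_hold` flag, and the 90-day window are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Record:
    """Hypothetical monitoring record; field names are assumptions."""
    record_id: str
    collected_at: datetime
    legal_hold: bool = False  # set when an investigation or legal request locks the data


def select_for_purge(records, retention_days, now=None):
    """Return records older than the retention window that are not on hold."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    return [r for r in records if r.collected_at < cutoff and not r.legal_hold]


if __name__ == "__main__":
    now = datetime(2026, 1, 31, tzinfo=timezone.utc)
    records = [
        Record("a", now - timedelta(days=200)),                   # expired
        Record("b", now - timedelta(days=200), legal_hold=True),  # expired but locked
        Record("c", now - timedelta(days=10)),                    # still in window
    ]
    for r in select_for_purge(records, retention_days=90, now=now):
        print("purge:", r.record_id)  # prints "purge: a"
```

The point of the sketch is the order of checks: the retention window decides what is eligible, and the hold flag overrides it, so a purge job cannot quietly delete data that an investigation needs.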

This isn’t about trusting vendors or not. It’s about response time and control under pressure – especially when access to raw data is restricted by process, not technology.

If monitoring data is “operational telemetry”, the cloud may be enough. If it’s “security evidence”, teams often prefer it closer to their internal controls. The key is choosing upfront, because changing where the monitoring data lives after months of history have piled up is the most painful migration in the whole monitoring stack.

Attack Surface: What Each Model Adds to Your Risk Map

Cloud monitoring usually expands risk on the vendor/admin plane, while on-prem expands risk on your own infrastructure plane. That doesn’t make either option “unsafe” by default – it just changes where misconfigurations and compromises tend to hurt first.

Here’s a fast “watch list” IT teams actually use during evaluation:

- Cloud risk hotspots: OAuth/API tokens, SSO misconfig, vendor-side admin access, exposed web consoles, weak tenant isolation assumptions.

- On-prem risk hotspots: open management ports, outdated agents/servers, weak segmentation, poor backup hygiene, internal privilege creep.

The agent is privileged software. Evaluate it with the same seriousness you’d apply to endpoint security tooling.

RBAC and Audit Logs: The Part That Decides Whether Monitoring Becomes a Problem

RBAC and auditability determine whether monitoring stays a controlled IT system or turns into internal drama. If permissions are vague or exports are too easy, access spreads quietly – and pulling it back later is usually the hardest conversation in the rollout.

A clean permission split that works in most orgs:

| Role | Should access | Should NOT access |
| --- | --- | --- |
| IT Admin | agent health, configs, rollout controls | HR-style “people views” |
| Security/Compliance | read-only evidence views, audited exports | day-to-day team dashboards |
| Team leads | aggregates, trends | raw logs, screenshots, exports |
| HR (if involved) | case-based, audited access | continuous monitoring views |
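
One way to read the table is as a deny-by-default permission map: each role gets only what the “Should access” column lists, and everything else is refused. The Python sketch below illustrates that idea. The role keys and permission names are hypothetical labels invented for the example, not any product's actual RBAC schema.

```python
from enum import Enum


class Permission(Enum):
    """Hypothetical permission names derived from the table's columns."""
    AGENT_HEALTH = "agent_health"
    CONFIG = "config"
    ROLLOUT = "rollout"
    EVIDENCE_READ = "evidence_read"
    AUDITED_EXPORT = "audited_export"
    AGGREGATES = "aggregates"
    CASE_ACCESS = "case_access"


# Only the "Should access" column is encoded; anything not listed is denied.
ROLE_PERMISSIONS = {
    "it_admin": {Permission.AGENT_HEALTH, Permission.CONFIG, Permission.ROLLOUT},
    "security_compliance": {Permission.EVIDENCE_READ, Permission.AUDITED_EXPORT},
    "team_lead": {Permission.AGGREGATES},
    "hr": {Permission.CASE_ACCESS},
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles and unlisted permissions get no access."""
    return permission in ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    print(is_allowed("it_admin", Permission.CONFIG))           # True
    print(is_allowed("team_lead", Permission.AUDITED_EXPORT))  # False
    print(is_allowed("contractor", Permission.AGGREGATES))     # False (unknown role)
```

Encoding only grants keeps the “Should NOT access” column true automatically: a team lead cannot export because nobody ever gave that role the export permission, not because a separate deny rule happens to exist.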

Mini-checklist before rollout:

- SSO enforced for every console login

- Separate roles for “view reports” vs “export raw data”

- Audit log includes: who viewed, who exported, and what timeframe
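
As a rough illustration of the last checklist item, the Python sketch below shows what a single audit entry might carry: who acted, whether they viewed or exported, and which timeframe of monitoring data they touched. The `AuditEvent` fields and the JSON-lines output are assumptions made for the example; real products will log this differently.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"view", "export"}


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; field names are assumptions."""
    actor: str        # who viewed or exported
    action: str       # "view" or "export"
    range_start: str  # start of the data timeframe that was accessed
    range_end: str    # end of the data timeframe that was accessed
    logged_at: str    # when the access itself happened


def make_event(actor, action, range_start, range_end, now=None):
    """Build an audit event, rejecting actions the log does not recognise."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    now = now or datetime.now(timezone.utc)
    return AuditEvent(
        actor=actor,
        action=action,
        range_start=range_start.isoformat(),
        range_end=range_end.isoformat(),
        logged_at=now.isoformat(),
    )


def to_log_line(event):
    """Serialise the event as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


if __name__ == "__main__":
    event = make_event(
        "alice",
        "export",
        datetime(2026, 1, 1, tzinfo=timezone.utc),
        datetime(2026, 1, 7, tzinfo=timezone.utc),
    )
    print(to_log_line(event))
```

Recording the accessed timeframe separately from the log timestamp is what lets an auditor answer “who looked at last March's data?” rather than only “who logged in last week?”.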

When Cloud Is a No-Go: The Self-Hosted Route That Still Feels Modern

Self-hosted monitoring is often the cleanest choice when you need tight governance but still want a product-like experience. It lets you keep monitoring data inside your boundary, align retention with your internal policies, and control access using your own identity stack.

Here’s the short comparison IT managers usually care about (not a sales pitch, just the practical angle):

| Requirement that shows up in real life | Cloud model usually feels like | Self-hosted usually feels like |
| --- | --- | --- |
| Data residency / “keep it inside” | Negotiation + vendor docs | “It’s on our servers” |
| Incident response speed | Depends on vendor portal/tools | Direct access + internal workflow |
| Network dependency | Always-on expectation | Works within your network rules |
| Change control | Vendor release cycle | Your rollout cadence |

For teams that specifically want an enterprise-grade self-hosted setup, employee monitoring platforms like DeskGate can fit this “keep control, stay scalable” approach without forcing you into a cloud-only architecture.

So Which Model Actually Makes Sense for IT Teams in 2026

The best choice is the one that still feels solid when the “easy month” is over and the hard questions start. In practice, most teams don’t switch models because of missing features – they switch because the governance story falls apart, the rollout becomes fragile, or access control becomes politically messy.

Cloud is a strong fit when the organization is already cloud-first, the monitoring data is treated as operational telemetry, and IT wants fast rollout with minimal infrastructure ownership. The hidden cost is dependency: your workflows and response speed are tied to a vendor console, vendor policies, and the way exports and retention are implemented.

On-prem makes sense when monitoring data is closer to evidence than analytics. The advantage is control and audit clarity; the cost is operational responsibility – patching, backups, segmentation, and capacity. In other words, it trades vendor dependency for internal ownership.

Here’s a blunt rule of thumb: if your monitoring rollout would create uncomfortable questions about data access and retention, choose the model that reduces ambiguity, not the one that looks modern. A tool that you can explain clearly to security, legal, and leadership will outperform a “feature-rich” tool that forces messy workarounds.